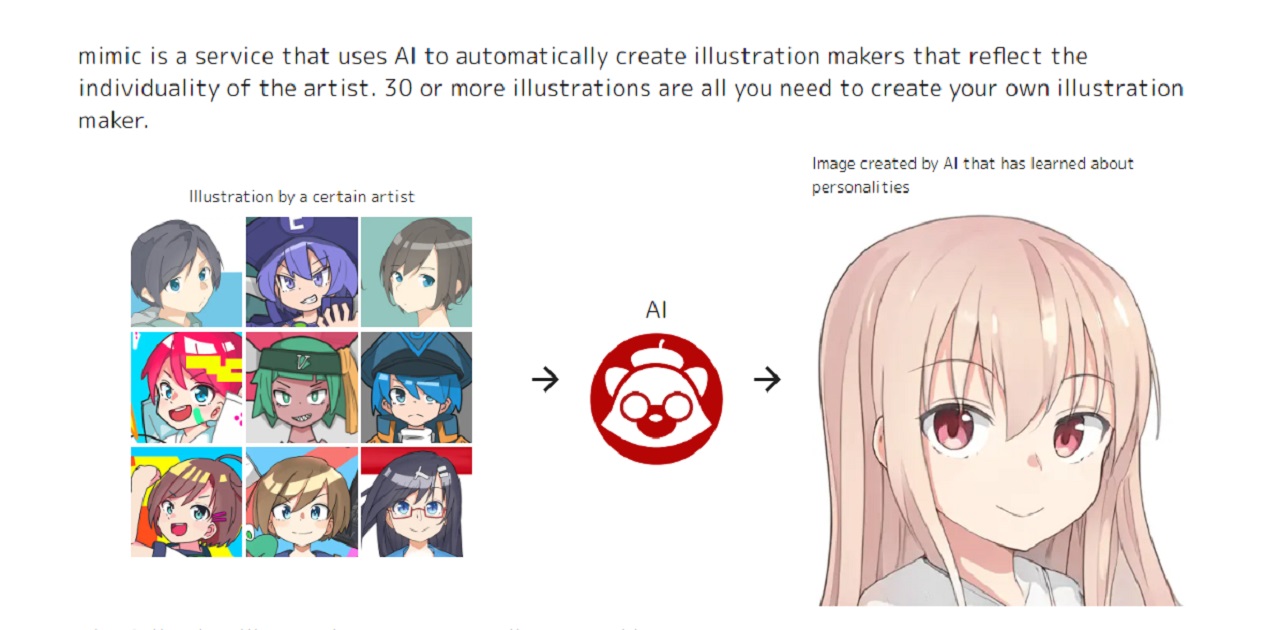

RADIUS5 has announced its future plans for mimic, an AI-powered image-generating service in which users upload their own illustrations and the service’s AI learns from them to create new illustrations in a similar style. The beta version of the service was released in August, but after many users pointed out the high risk of misuse, it was quickly suspended so that it could be improved (related article).

The announcement details the new mimic beta version 2.0, which will incorporate stronger measures to prevent fraudulent use of the service. The new version of the service is expected to launch sometime during October.

RADIUS5 defined fraudulent uses of the service as “uploading images for which one does not own the rights nor have permission to use” and “using images published on mimic in ways that go outside the intended scope of use.” In order to combat these activities, prospective users of the service will have their Twitter accounts examined according to the mimic account examination guidelines. Following the examination, only accounts that RADIUS5 determines are uploading their own images will be able to make use of the service.

Further details of the mimic account examination guidelines are being withheld so that malicious users cannot look for workarounds. However, the criteria include whether RADIUS5 can confirm activity on the Twitter account; whether it can confirm that the user is the actual creator of the art posted on Twitter or on any illustration site linked to that Twitter account; and whether the user has refrained from actions such as using violent or anti-social expressions or promoting suicide or self-harm.

Due to the strict criteria that have been put in place, there will be instances where the account evaluation takes a significant amount of time.

These measures appear designed to ensure that only users who create illustrations on a regular basis can use the service. It should also be noted that satisfying all of the conditions described above does not guarantee that a user will pass the screening.

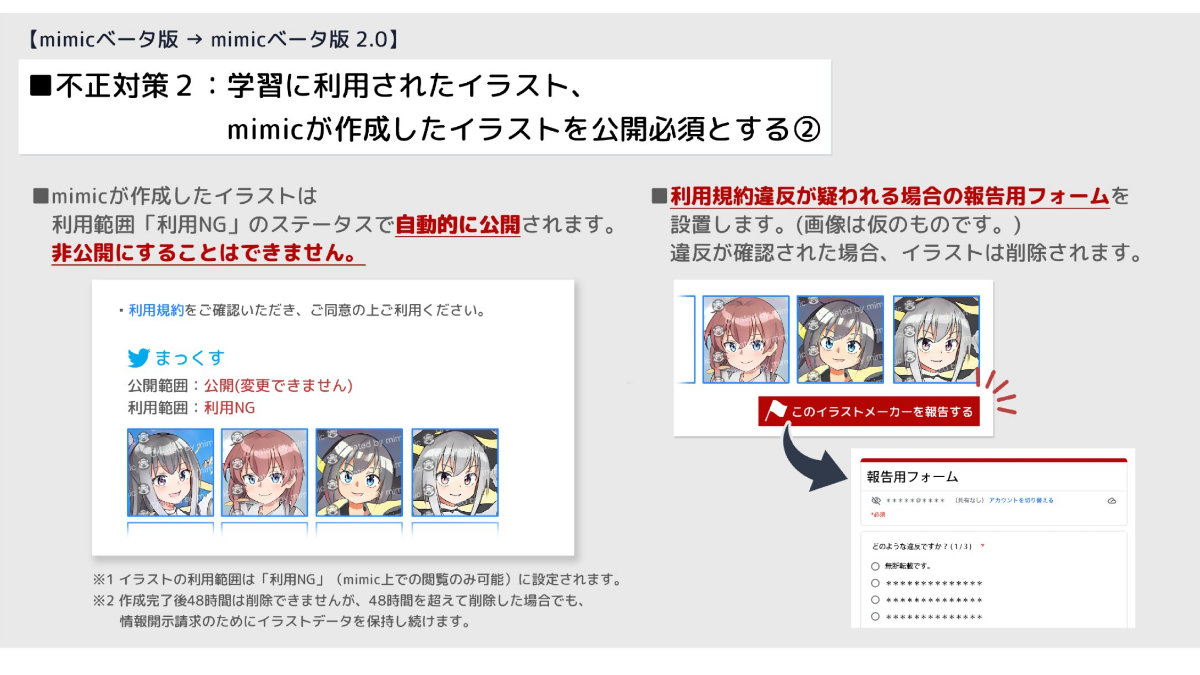

Another measure that is being introduced to maintain transparency is the requirement that all images used as learning material by the AI and all images created by the service must be made public. Images cannot be made private and cannot be deleted within the 48-hour period following their creation.

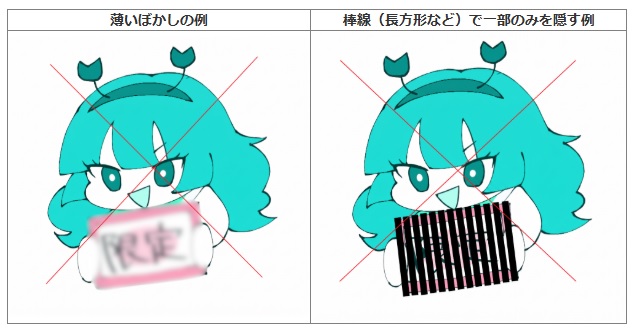

Furthermore, images used for AI learning and those generated by mimic will be published with a large watermark. Specifics have not been revealed, but the watermark is expected to include information that can be used to trace the image. It is believed to be intended to help prevent later misuse of images created through the service.

The pages for the illustrations will include links to the creators’ Twitter accounts, and there will also be a form for reporting suspected violations of the terms of use. The announcement explains that those who do not want their images made public should not upload them to the site.

All images uploaded to or created by the service will be visible to other users. This allows users to check whether their own artwork is being used without permission and also makes it easier to see what the images generated by the service are being used for.

The company has stated that it will keep an eye on the situation and make further changes as necessary.

The announcement of beta version 2.0 and all of the changes it includes has been met with a positive reception online. Although the number of people who can use the service will be more limited than before, it brings the service more in line with its original purpose of assisting creators in producing content. It is difficult for creators to use a service like this with peace of mind if they believe it may cause issues, but it’s clear that the company is actively taking steps in an attempt to alleviate any concerns regarding misuse of the service.

Written by Marco Farinaccia, based on the original Japanese article (original article’s publication date: 2022-09-15 17:34 JST)